How to Orchestrate AI Roles in the Insights Supply Chain

Introduction: AI and the Blurring of Organizational Boundaries

Generative Artificial Intelligence (AI) is blurring the lines between functions in certain enterprises. For example, product designers can now contribute directly to code, while engineers can increasingly participate in product design decisions. In the world of data analytics, generative AI techniques like “conversational analytics” attempt to enable greater participation in insights generation by business users who traditionally rely on insights analysts at the end of the Insights Supply Chain (ISC). Using natural language prompts, conversational analytics allows business users to operate upstream in the Insights Supply Chain, directly engaging with insights analytics, data analytics, and even aspects of data engineering.

Centralized Governance, Distributed Execution

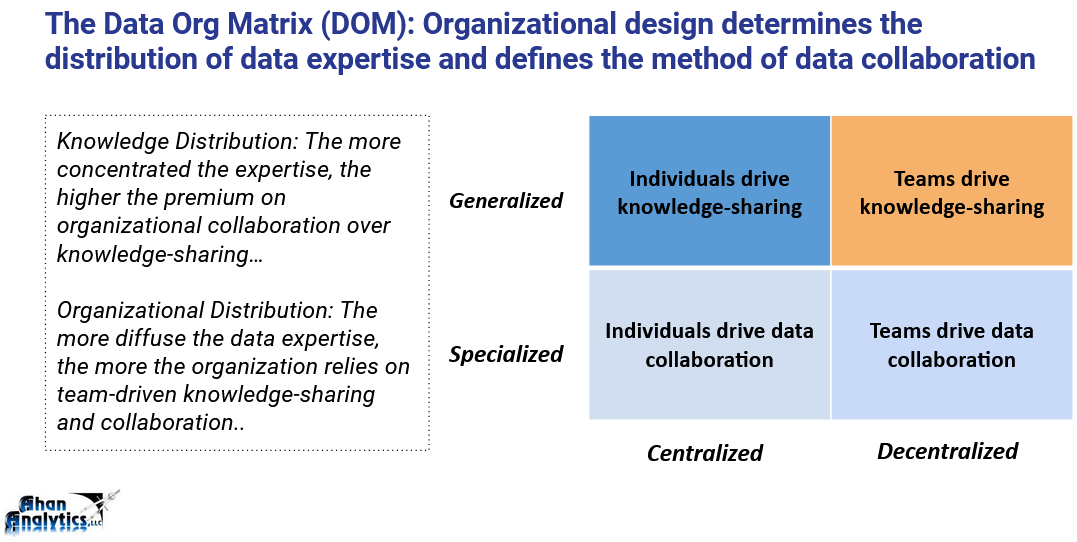

In this era of ever-increasing democratization and decentralization of analytics, David Colwell, VP of AI & ML at Tricentis, makes the case for a hybrid organizational model that centralizes data governance and decentralizes implementation by distributing AI-related expertise across the organization. In a recent DataCamp interview, Colwell explains, “because AI is powerful… everyone is enthusiastic and concerned… you need centralized governance with distributed execution”. This recommendation reveals the need for a nuanced understanding of the Insights Supply Chain. The Data Org Matrix (DOM) is a component of the Insights Supply Chain that maps organizational structure against the distribution of data expertise. Per the DOM, Colwell’s organizational design emphasizes specialized data professionals working in an organization that is centralized in its data governance but may be decentralized by organizational function.

Positioning AI in the Data Org Matrix

Since the organization generates insights and output in a partially decentralized fashion, at first glance, this configuration resembles the lower-right quadrant of the DOM, where execution is decentralized. However, the edicts of standardized data governance create a nuance. Colwell’s organizational model creates an interdependence which effectively requires the organization to coordinate in a centralized manner. For example, if a particular business unit needs to change the definition of a metric or spin up a new data source, it must coordinate with the existing centralized data ecosystem and the data professionals responsible for that ecosystem. In fact, the centralized governance determines the extent to which operations can act in a decentralized fashion by coordinating methods, developing benchmarks for differentiating success from failure, and standardizing roles.

Reconciling AI Roles in the Insights Supply Chain

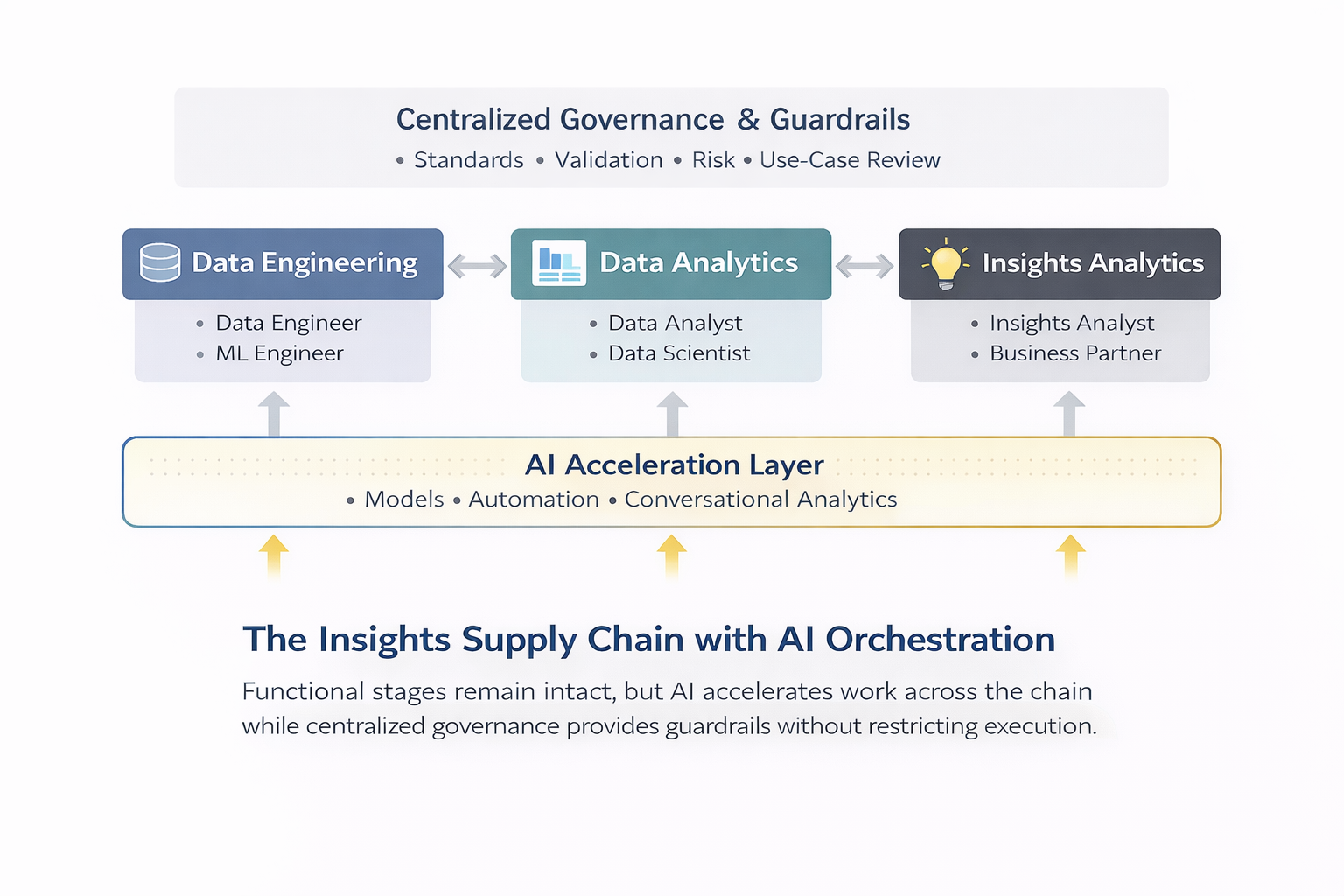

Colwell refers nearly interchangeably to data scientists, machine learning engineers, and AI engineers. The best way to reconcile this usage is to think of these specialists as members of a unified “expert team” or “guild”. They are unified by the need to integrate data, machine learning solutions, customer needs, and AI-driven methodologies to help product teams (who may be distributed across an organization) rapidly develop prototypes. Importantly, Colwell is careful to note that this centralized team does not perform a gatekeeper role. Instead, it accelerates work in the Insights Supply Chain by scaling AI-driven solutions.

Some nuance appears in the focus for each role. The data scientist manages the “new currency” of “gen-AI ready data,” shifting from training models, a task that is now somewhat commoditized, to the critical “data hygiene” and preparation required for fine-tuning models. Machine learning engineers and AI engineers function more as AI architects or AI advisors who teach the organization how to manage the probabilistic nature of AI and how to interpret the results that AI generates from prompts and data.

Guardrails, Not Gatekeepers

As AI capabilities diffuse across the organization, governance must shift from control to guardrails.

Colwell points out that these AI-related specialists are particularly tasked with making sure AI does not get used in scenarios where human review is unavailable. The person in the Insights Analyst role must be vigilant about people using AI implementations too far outside of their domain of expertise, like a layperson generating legal advice or a salesperson generating user experience designs. The Insights Analyst must prepare and evaluate “recovery mechanisms” for the cases when the AI solution inevitably fails. In other words, the Insights Analyst acts as a kind of knowledge broker in the organization.

In practice, AI-related roles in the ISC increasingly overlap, requiring intentional orchestration rather than rigid separation:

- Data scientists often step into insights analyst responsibilities.

- Data analysts increasingly work alongside data scientists and machine learning engineers on modeling, querying, assessing, and organizing data.

- Machine learning and AI engineers support or absorb data engineering tasks.

When the locus of insights resides with AI, the data scientist must collaborate closely with machine learning and AI engineers, or take on those roles, to deliver insights for business partners. Data organizations can use the ISC to think strategically about whether and how to make these roles distinct. (For example, see “How Artisans Work in the Insights Supply Chain” for a description of how data scientists as artisans can cover the entire flow of the Insights Supply Chain.)

AI as an Organizational Accelerator

Colwell speaks from the perspective of a company that appears to make data a core competence. The ISC’s DOM uses the “data as a core competence” concept to further clarify the centralized vs decentralized distinction as a strategic choice. In the lower-left quadrant, an organization unifies standardized metrics and methods to maximize data’s operational excellence across the company. This arrangement aligns with Colwell’s narrative.

In the lower-right quadrant, business units move fast with their own data ecosystems specialized for their purposes. These systems are not shared resources, and each business unit owns semantics that neither affect nor depend on the semantics of other business units. (See “How Artisans Work in the Insights Supply Chain” for further explanation.) This kind of decentralization differs from the tight coordination that Colwell references.

Shifting Upstream: Validation, Risk, and Responsibility

Colwell’s framework informs how AI roles should be orchestrated across the Insights Supply Chain, with more responsibility placed upstream.

Colwell suggests that the ISC will operate faster with data capabilities in the hands of staff outside the data professional groups. AI is not just another tool that increases the productivity of the data professionals in the Insights Supply Chain. Anyone who has access to data experiences increased productivity with AI. This expanded scale and scope compels organizations to increase investment and expertise further upstream in the ISC. Colwell calls this process of pushing expertise further upstream “a shift in testing to the left”. Validation and quality assurance become more critical upstream in the ISC and put a higher premium on successful centralization.

Conversational Analytics and the New Role of the Insights Analyst

AI like conversational analytics also impacts the ISC by shifting the responsibility of the Insights Analyst upstream. Insights analysts act as “guardians of use cases” who determine whether a problem is appropriate for AI. While companies like Looker envision a world where business users are let loose with AI like conversational analytics, Colwell recognizes the need for practicality. He warns that “if your use case cannot tolerate consistent persistent incurable failure then you can’t use AI”. Insights analysts, in coordination with the rest of the ISC, must make that assessment and make transparent where business users might get misled by errors like false positives, incorrect interpretations, and even questions that the data ecosystem is not designed to handle.
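One way to picture this “guardian of use cases” assessment is as a simple checklist applied to each proposed use of AI. The sketch below is only an illustration of that idea in Python. The `UseCase` fields and the `assess_use_case` function are hypothetical names invented here, not something Colwell or the ISC prescribes. The four checks paraphrase the concerns raised in this article: tolerance for failure, availability of human review, the user's domain expertise, and the existence of a recovery mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    """Hypothetical description of a proposed AI use case (illustrative only)."""
    name: str
    tolerates_failure: bool          # can the use case absorb wrong answers?
    human_review_available: bool     # can a person review the AI output?
    user_has_domain_expertise: bool  # is the user working inside their own domain?
    has_recovery_mechanism: bool     # is there a plan for when the AI fails?


def assess_use_case(use_case: UseCase) -> list[str]:
    """Return the guardrail concerns for a use case; an empty list means none were flagged."""
    concerns = []
    if not use_case.tolerates_failure:
        concerns.append("use case cannot tolerate failure")
    if not use_case.human_review_available:
        concerns.append("no human review available")
    if not use_case.user_has_domain_expertise:
        concerns.append("user is outside their domain of expertise")
    if not use_case.has_recovery_mechanism:
        concerns.append("no recovery mechanism prepared")
    return concerns


# Two made-up examples: one risky, one that passes every check.
legal_advice = UseCase(
    name="layperson generating legal advice",
    tolerates_failure=False,
    human_review_available=False,
    user_has_domain_expertise=False,
    has_recovery_mechanism=False,
)
sales_summary = UseCase(
    name="sales manager summarizing weekly pipeline data",
    tolerates_failure=True,
    human_review_available=True,
    user_has_domain_expertise=True,
    has_recovery_mechanism=True,
)

for case in (legal_advice, sales_summary):
    concerns = assess_use_case(case)
    status = "needs review" if concerns else "no concerns flagged"
    print(f"{case.name}: {status}")
    for concern in concerns:
        print(f"  - {concern}")
```

The function returns a list of concerns rather than a yes/no answer, in keeping with the idea of guardrails over gatekeeping: the output gives the insights analyst and the business user something concrete to discuss instead of simply blocking the request.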

Conclusion: Orchestrating AI Roles for Scalable Insights

The scale and scope of artificial intelligence make organizational design increasingly critical for data professionals. As AI expands who can generate insights and how quickly they can do so, leaders must intentionally orchestrate roles across the Insights Supply Chain.

The Insights Supply Chain provides a durable framework for this orchestration. The ISC clarifies where to centralize governance, where execution can productively decentralize, and how AI-driven roles – from data scientists to insights analysts – must evolve in sync.

Organizations that treat AI as merely a productivity tool will struggle with scale, risk, and accountability. Organizations will move faster with confidence when they treat AI as an organizational capability that they design, govern, and integrate across the Insights Supply Chain.

The next step is to intentionally design how AI roles operate together. Leaders should use the Insights Supply Chain to audit role boundaries, strengthen upstream guardrails, and deliberately orchestrate AI capabilities where they create the most value.

Need help with your Insights Supply Chain? Contact Ahan Analytics and stay up-to-date with me on Facebook or LinkedIn!